Every organization operates on a foundational assumption: the people acting on its behalf are, in fact, people. An employee approves a payment. A manager authorizes access. An executive signs off on a decision. Identity — knowing who did what, and whether they were authorized to do it — is the bedrock of accountability, compliance, and governance.

That assumption is no longer safe to make.

A New Kind of Actor Is Inside Your Organization

AI agents — software systems that take actions autonomously, call external services, read and write data, and make decisions without a human approving each step — are moving from experimental to operational across virtually every industry. They don’t log in the way a person logs in. They don’t have a face to recognize or a badge to swipe. And they multiply rapidly: a single workflow can spawn dozens of sub-agents, each acting on your behalf, each accessing your systems, often without appearing in any directory your security team manages.

Analysts estimate that non-human identities (agents, bots, and automated services) already outnumber human employees in enterprise systems by ratios of 45:1 to 100:1. Most organizations have no consolidated view of how many exist, what they can access, or who authorized them.[1]

This is not a future risk. It is a present condition.

The Old Model Assumed an Application as Gatekeeper

For decades, controlling who could do what inside an organization was tractable because every action flowed through an application. The application itself was the control point: it enforced what operations were available, required approval for sensitive steps, and created a natural audit trail. The security team managed user accounts; the application managed what those accounts could do.

AI agents bypass that model entirely. They interact directly with data and services through programming interfaces, with no application in the middle to enforce limits. The controls that lived invisibly inside the application must now be explicitly designed, implemented, and monitored. Most organizations have not done this yet because, until recently, they didn’t need to.[2]

The Core Governance Questions Have No Good Answers Today

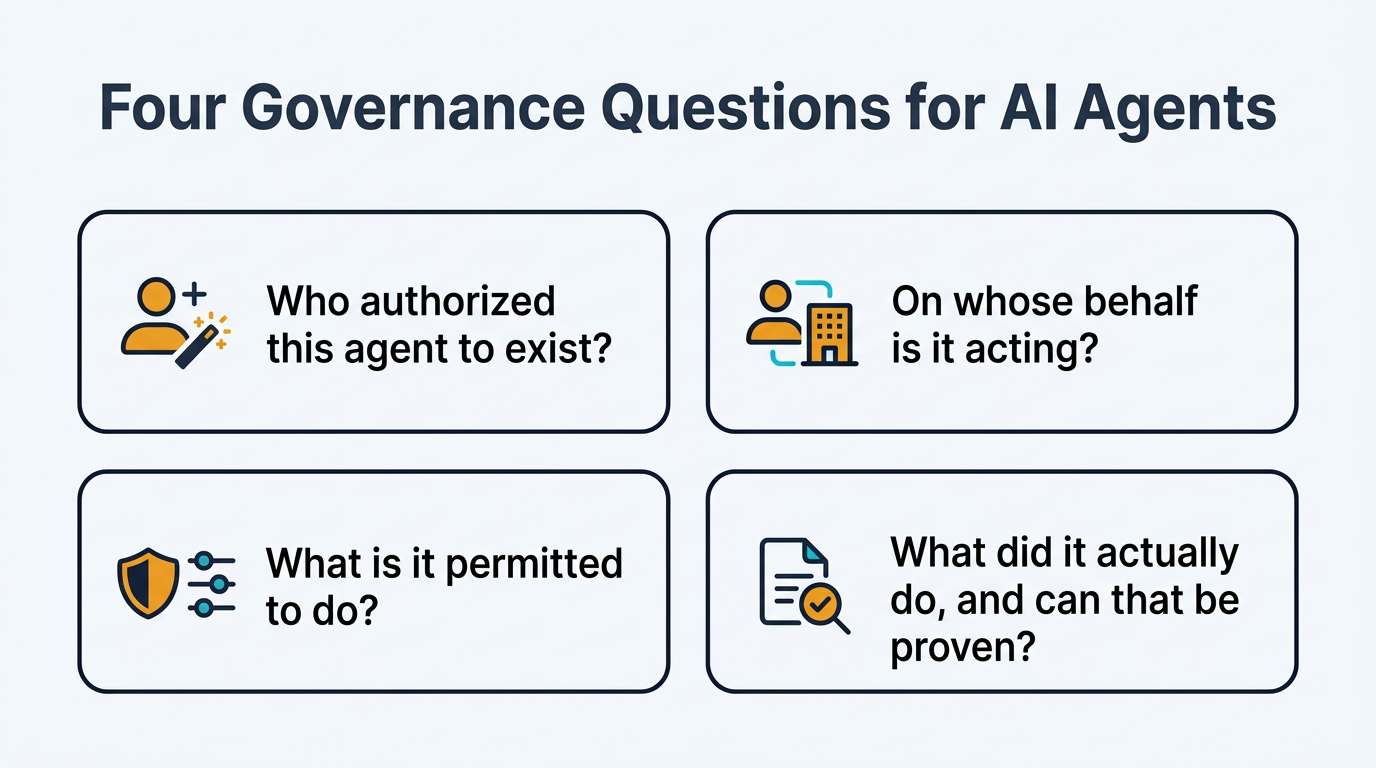

When an AI agent takes an action — moves funds, modifies a record, sends a communication, grants access to a system — your organization needs to be able to answer four questions:

Who authorized this agent to exist? Someone provisioned it. That person, and the organizational role they hold, must be traceable.

On whose behalf is it acting? An agent may be acting for a specific employee, for a department, or autonomously for the organization as a whole. Each carries different accountability.

What is it permitted to do? The scope of authorization must be defined and enforced — not assumed from the fact that the agent can technically reach a system.

What did it actually do, and can that be proven? For regulatory compliance, litigation, and incident response, the audit trail must be as robust for agent actions as for human ones.

Today, most organizations cannot confidently answer any of these questions for their AI agent populations. That is a governance gap with direct implications for regulatory compliance, fiduciary responsibility, and legal liability.

The Architecture That Closes the Gap

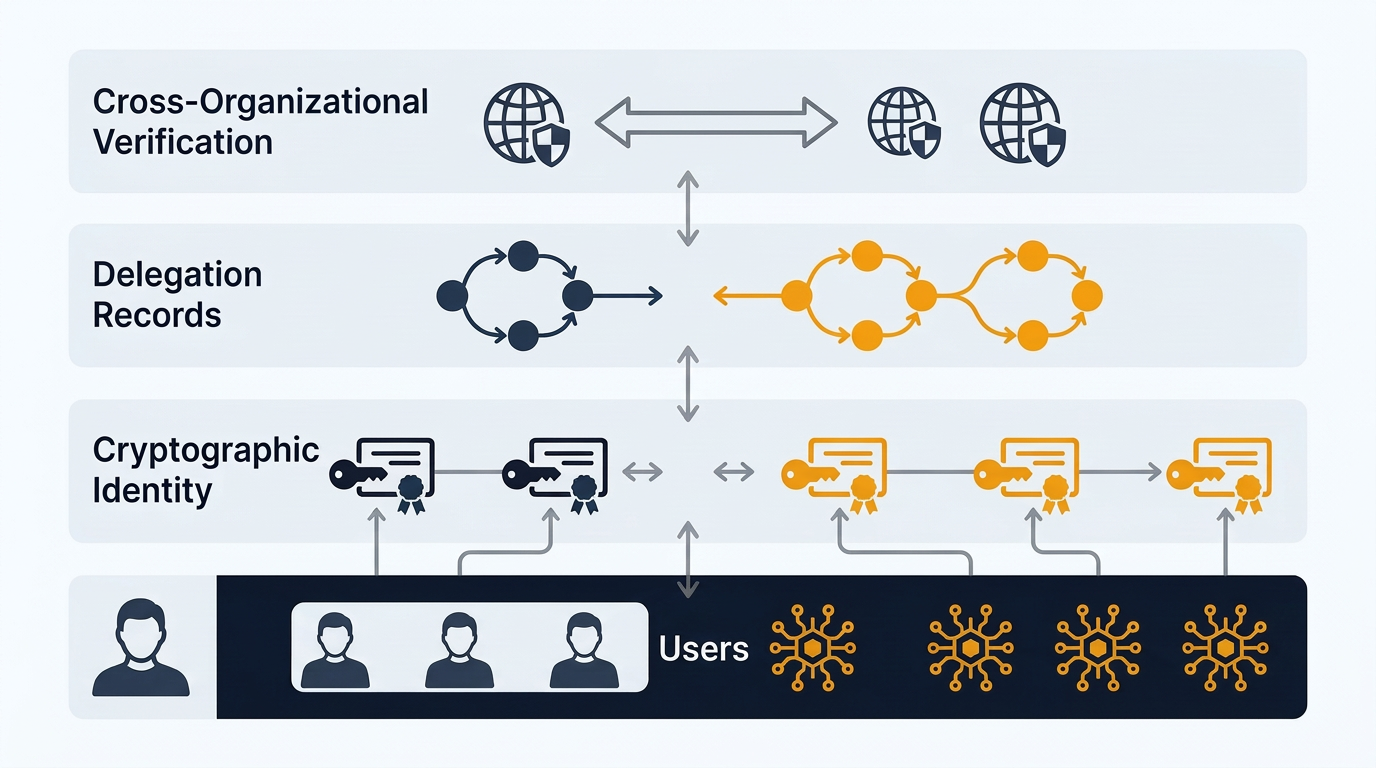

The security and identity industry is converging on a layered approach that treats agents as first-class organizational entities — with formal identities, defined lifecycles, and auditable authorization chains — rather than as informal automation bolted onto existing infrastructure.

The model has four components, each addressing a distinct governance need.

Organizational directories (the systems that track employees and their roles) must be extended to formally register agents as a separate category of organizational actor. Just as different countries issue different passports to their citizens, each asserting a specific provenance and set of rights, agents must receive credentials that are structurally distinct from employee credentials, issued through a different process, and governed by different lifecycle rules. An agent that inherits an employee’s permissions, or an employee who operates under an agent’s credentials, represents a category failure with direct accountability consequences.[3]
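As a rough illustration of registering agents as a distinct category, the sketch below models a toy in-memory directory. The names `Directory`, `DirectoryEntry`, and `ActorKind` are hypothetical and not taken from any product; a real deployment would sit on an existing identity or HR system. The one rule it tries to show is that an agent cannot be registered without a registered employee as its accountable owner.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ActorKind(Enum):
    EMPLOYEE = "employee"
    AGENT = "agent"


@dataclass(frozen=True)
class DirectoryEntry:
    actor_id: str
    kind: ActorKind
    owner_id: Optional[str] = None  # accountable human; set only for agents


class Directory:
    """Toy in-memory directory (assumption: stands in for a real IAM system)."""

    def __init__(self) -> None:
        self._entries = {}

    def register_employee(self, employee_id: str) -> DirectoryEntry:
        entry = DirectoryEntry(employee_id, ActorKind.EMPLOYEE)
        self._entries[employee_id] = entry
        return entry

    def register_agent(self, agent_id: str, owner_id: str) -> DirectoryEntry:
        owner = self._entries.get(owner_id)
        # An agent must trace back to a registered human, never to another agent.
        if owner is None or owner.kind is not ActorKind.EMPLOYEE:
            raise ValueError(f"agent {agent_id!r} needs a registered employee as owner")
        if agent_id in self._entries:
            raise ValueError(f"identifier {agent_id!r} is already registered")
        entry = DirectoryEntry(agent_id, ActorKind.AGENT, owner_id)
        self._entries[agent_id] = entry
        return entry

    def agents_owned_by(self, owner_id: str) -> list:
        """Return the sorted ids of all agents whose owner is owner_id."""
        return sorted(
            e.actor_id
            for e in self._entries.values()
            if e.kind is ActorKind.AGENT and e.owner_id == owner_id
        )
```

Keeping `kind` as an explicit field, rather than inferring it from naming conventions, is one way to make the employee/agent distinction something downstream systems can check.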

Cryptographic identity gives each agent a verifiable, tamper-evident credential that proves what it is, when it was authorized, and when that authorization expires. Short-lived credentials, automatically renewed and automatically expired, ensure that the blast radius of any compromise is bounded in time. This is the equivalent of issuing time-limited contractor badges rather than permanent employee keycards: the expiry is a governance feature, not a limitation.[4]
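The idea of a tamper-evident credential with a built-in expiry can be sketched with the Python standard library. This is a minimal illustration under loud assumptions: it uses a shared-secret HMAC as a stand-in for the certificate-based (PKI) signatures a production system would more likely use, and `ISSUER_KEY`, `issue_credential`, and `verify_credential` are hypothetical names introduced here.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Assumption: demo-only shared secret. Real systems would use asymmetric keys.
ISSUER_KEY = b"demo-only-secret"


def issue_credential(agent_id: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Return a signed token that names the agent and carries its own expiry."""
    issued_at = time.time() if now is None else now
    claims = {"sub": agent_id, "iat": issued_at, "exp": issued_at + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    tag = hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + tag


def verify_credential(token: str, now: Optional[float] = None) -> dict:
    """Return the claims if the token is authentic and unexpired, else raise."""
    payload, _, tag = token.rpartition(".")
    expected = hmac.new(ISSUER_KEY, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the tag matched.
    if not hmac.compare_digest(tag.encode(), expected.encode()):
        raise ValueError("signature mismatch: credential altered or not issued here")
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    if current >= claims["exp"]:
        raise ValueError("credential expired")
    return claims
```

A caller would issue a token with a short `ttl_seconds` and re-issue it on a schedule; a stolen token then stops verifying once its `exp` time passes, which is the bounded blast radius described above.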

Delegation records encode the authorization chain in a verifiable, portable form. When a human executive authorizes an agent to act on their behalf, or when one agent delegates a task to another, that authorization is recorded as a cryptographically signed document that travels with the agent and can be verified by any system the agent interacts with. The chain of accountability is preserved end-to-end, across organizational boundaries, without requiring every participant to share the same internal systems.[5]
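One way to picture a delegation chain is as a list of signed links, each handing a subset of the previous link's permissions to the next party. The sketch below is illustrative only: the `KEYS` table, the principal names, and the scope strings are invented for the example, and HMAC with per-principal secrets stands in for the public-key signatures that would let a third party verify a link without holding the signer's secret.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Assumption: hypothetical per-principal signing keys, for illustration only.
KEYS = {"cfo": b"cfo-key", "agent-a": b"agent-a-key"}


@dataclass(frozen=True)
class Delegation:
    delegator: str
    delegate: str
    scopes: frozenset
    signature: str


def _sign(delegator: str, delegate: str, scopes) -> str:
    body = json.dumps([delegator, delegate, sorted(scopes)]).encode()
    return hmac.new(KEYS[delegator], body, hashlib.sha256).hexdigest()


def sign_delegation(delegator: str, delegate: str, scopes: set) -> Delegation:
    """Create one signed link: delegator grants scopes to delegate."""
    frozen = frozenset(scopes)
    return Delegation(delegator, delegate, frozen, _sign(delegator, delegate, frozen))


def verify_chain(chain: list, root: str) -> frozenset:
    """Return the scopes held by the final delegate, or raise ValueError."""
    if not chain:
        raise ValueError("empty chain")
    expected_delegator, allowed = root, None
    for link in chain:
        # Each link must be issued by whoever received the previous link.
        if link.delegator != expected_delegator:
            raise ValueError("broken chain: unexpected delegator")
        if link.delegator not in KEYS:
            raise ValueError("unknown delegator")
        expected_sig = _sign(link.delegator, link.delegate, link.scopes)
        if not hmac.compare_digest(link.signature.encode(), expected_sig.encode()):
            raise ValueError("bad signature")
        # A delegate can pass on only what it was itself granted.
        if allowed is not None and not link.scopes <= allowed:
            raise ValueError("scope escalation")
        allowed, expected_delegator = link.scopes, link.delegate
    return allowed
```

In this sketch the verifier starts from a known human root (`root`) and walks forward, so the final agent's authority always traces back to a named person and can only narrow along the way.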

Cross-organizational verification allows external partners, regulators, and auditors to verify an agent’s identity and authorization chain without requiring access to your internal infrastructure. This mirrors how the global email system works: organizations don’t need bilateral agreements to exchange verified messages; they rely on shared resolution infrastructure and cryptographic signing. The same principle, applied to agent identity, enables trustworthy agent-to-agent interactions across organizational boundaries at scale.[6]

The Human Question Remains the Hard One

Technology can enforce that an agent’s actions are traceable and authorized. It cannot, on its own, guarantee that a human being — with genuine judgment and accountability — was actually in the loop when it mattered. That boundary is under pressure from two directions.

On one side, the volume and speed of agentic activity mean that requiring human approval for every action is operationally impossible. Organizations must make deliberate choices about which categories of action require explicit human authorization and which can proceed autonomously within defined guardrails.

On the other side, the technical signals used to confirm human presence (biometric verification, behavioral patterns, physical security gestures) are being challenged by advances in AI-generated synthetic identity. The honest assessment is that “confirmed human authorization” is becoming a probabilistic claim rather than an absolute one, supported by multiple independent signals rather than a single definitive proof.[7]

This has direct implications for governance: high-consequence decisions — material financial transactions, changes to access privileges, actions with regulatory significance — should require multiple independent signals of human authorization, not just a single credential check. The architecture supports this; the organizational policies to require it must be explicitly adopted.
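A policy of this kind can be made enforceable in code rather than left as a written statement. The sketch below is a simplified assumption of how that might look: the action categories, the thresholds in `REQUIRED_SIGNALS`, and the signal kinds are all hypothetical, and counting distinct signal kinds is only a crude proxy for true independence between signals.

```python
from dataclasses import dataclass

# Assumption: illustrative categories and thresholds. Each organization sets its own.
REQUIRED_SIGNALS = {
    "routine": 0,           # may proceed autonomously within guardrails
    "access_change": 2,     # changes to access privileges
    "material_payment": 2,  # material financial transactions
}


@dataclass(frozen=True)
class HumanSignal:
    kind: str      # e.g. "hardware_key", "biometric", "manager_callback"
    approver: str  # the human the signal is attributed to


def is_authorized(category: str, signals: list) -> bool:
    """Return True if enough distinct kinds of human signal back the action."""
    required = REQUIRED_SIGNALS.get(category)
    if required is None:
        return False  # unknown categories are denied by default
    # Repeating the same kind of check does not count as a second signal.
    distinct_kinds = {s.kind for s in signals}
    return len(distinct_kinds) >= required
```

For example, a payment backed by a hardware key and a separate manager callback would pass, while the same hardware key presented twice would not.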

What Boards and CEOs Should Be Asking

Boards and chief executives should be pressing five questions:

Do we have a complete inventory of every non-human identity operating in our environment? If the answer is uncertain, the governance gap is real and present.

Is there a clear owner for every agent? Ownership means accountability — someone whose employment and authority backs the agent’s actions.

Are our compliance frameworks written for a world with only human actors? Most are. Updating them to address agentic action is a near-term necessity, not a future consideration.

What categories of action require human authorization, and is that requirement technically enforced? Policy statements are insufficient; the enforcement must be architectural.

How would we respond if an agent took a harmful action — could we trace it, contain it, and demonstrate accountability to a regulator? If the answer involves significant uncertainty, the incident response capability is not ready for the agentic environment.

The Strategic Reality

The organizations that will navigate this transition most effectively are those that treat AI agent identity not as a technical implementation detail, but as a governance and accountability framework that requires the same executive attention as financial controls or legal compliance. The risk is not primarily that agents will be hacked — though that risk is real. The deeper risk is that organizations lose the ability to answer, with confidence, the question that has always been at the center of accountability: who decided this, and were they authorized to?

In the age of autonomous agents, that question has a new and more complex answer. Building the architecture to support it is the foundational work of the next several years.

Footnotes

1. 2026 NHI Reality Report: 5 Critical Identity Risks. Why non-human identities outnumber humans in enterprise systems, and what to do about it.

2. The End of Traditional SaaS: How AI Agents Are Redefining Business Applications. AI agents eliminate the need for a rigid application stack by directly querying and updating databases.

3. How to Distinguish Between Human and Non-Human Identities. Human and non-human identities require structurally different authentication and governance approaches.

4. Keyfactor Validates PKI-Based Identity for Securing Agentic AI. Applying PKI and certificate lifecycle automation to agentic AI workloads.

5. Aries RFC 0104: Chained Credentials. Cryptographically signed delegation chains enable offline verification and powerful privilege delegation.

6. Decentralized Identifiers Based Interoperability Architecture. How DID, VC, and VP concepts enable lightweight, scalable interoperability between organizations.

7. What is Liveness Detection? Multi-signal approaches to confirming human presence in an era of synthetic identity.